Decision Trees

Understand decision trees deeply (Gini/entropy, split selection, overfitting, pruning) and be able to explain and debug tree behavior like a FAANG ML engineer.

Students can: - compute impurity and information gain, - implement a 1-level decision stump from scratch, - train a sklearn DecisionTree as a sanity check, - diagnose overfitting and data leakage risks.

Progress — 0/8 tasks

Interview Angles

- • Why can entropy be numerically unstable without eps?

- • How do you prevent overfitting in trees?

FAANG Gotchas

- • Candidate thresholds must be derived from sorted unique feature values (avoid meaningless thresholds)

- • Trees will happily learn leakage because they can memorize.

Asked At

Decision Trees — Student Lab

We start using sklearn in Week 4, but you’ll still implement core pieces from scratch.

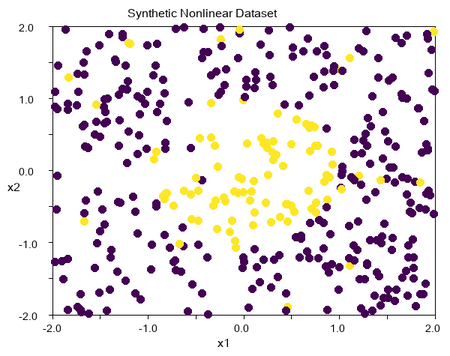

Section 0 — Synthetic dataset

We’ll create a non-linear boundary dataset to show how trees fit.

Figure: Synthetic Nonlinear Dataset

Impurity

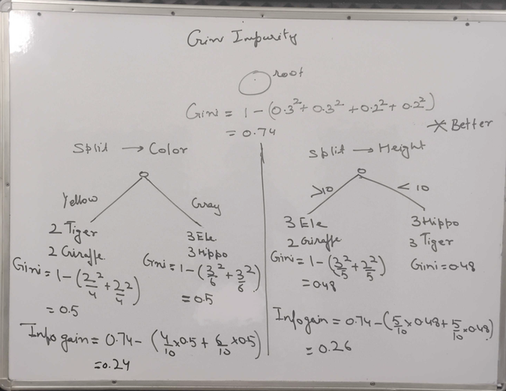

Section 1 — Impurity

Task 1.1: Gini impurity

Formula: Gini(S) = 1 - sum_k p_k^2

Binary case: Gini(S) = 1 - (p0^2 + p1^2)

FAANG gotcha: Gini is usually faster than entropy in training because it avoids logarithm computations.

Task 1.2: Entropy

Formula: Entropy(S) = -sum_k p_k log2(p_k)

Binary case: Entropy(S) = -(p0 log2(p0) + p1 log2(p1))

Best split (decision stump)

Section 2 — Best split (decision stump)

Task 2.1: Evaluate impurity after threshold split

Split rule: go left if X[:,j] <= t else right. Return weighted impurity and information gain.

Task 2.2: Find best (feature, threshold)

FAANG gotcha: if a split makes an empty child, skip it.

Task 2.3: Train a stump and evaluate

Use best_split to build a stump that predicts majority class on each side.

sklearn DecisionTreeClassifier (sanity check)

Section 3 — sklearn DecisionTreeClassifier (sanity check)

Task 3.1: Train trees with different max_depth

Failure mode: leakage

Section 4 — Failure mode: leakage

Task 4.1: Create a leaky feature

Add a feature that is directly derived from y and watch validation accuracy jump.

Explain: why do trees exploit leakage aggressively?